All your arch are belong to us!

Calyptia is providing true multi-arch container images with debug variants

TL;DR

All Fluent Bit container images have been updated to use Debian 11 along with various other improvements. Later we have an example showing how to build multi-arch images on Ubuntu.

The problem

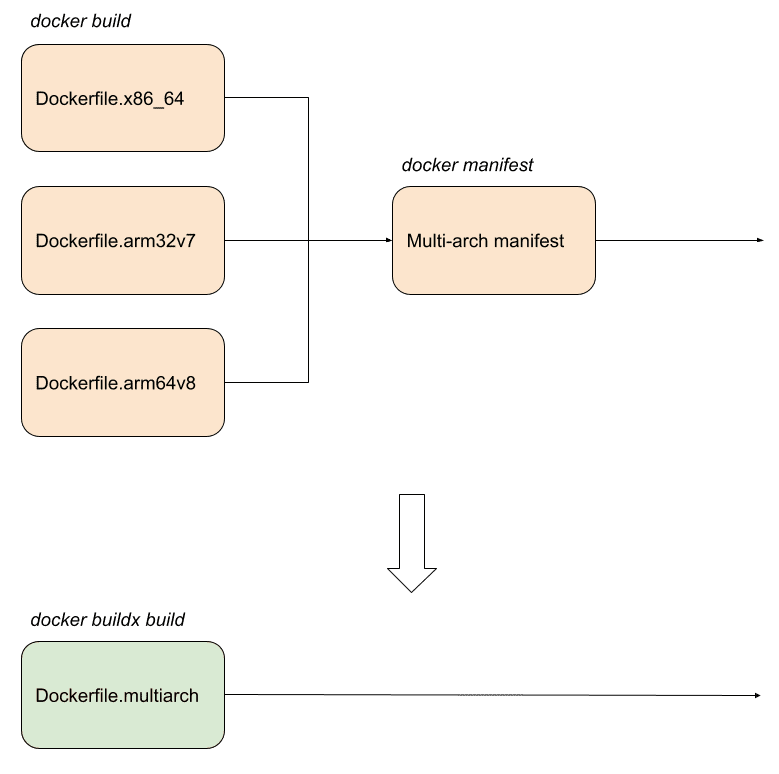

There is a good overview of how to do multi-arch container builds here: the gist is there is a hard way and an easy way. Fluent Bit used the hard way, namely using multiple images built from different definitions then linked together with a multi-arch manifest.

There are obviously reasons why it was done this way, including but not limited to:

Legacy as it was the only option when first attempted.

Attempting to optimise the size for each target.

Using a manifest with discrete image definitions does introduce many other issues though:

Updates to one image (e.g. new libraries for AMD 64 architecture) can be missed on the other images.

Effort multiplied to update each image to a new version, e.g. for Debian 11.

Single architecture only (AMD 64) debug image.

Brittle dependencies using direct copying of libraries rather than package installation.

CI effort and time to build each individual image and then link it into a manifest.

The (possible) solution

A single definition simplifies the overall process and prevents changes being missed or made incorrectly for a given architecture. We may lose some of the per-target optimisation, although this still needs to be confirmed.

We go from building three separate Dockerfiles, one for each architecture, then linking them together with a manifest to building all three from a single Dockerfile all in one go.

We decided therefore to provide true multi-arch and single definition container images as a new addition to evaluate this and get some feedback compared to the originals. The single Dockerfile replaces the use of three separate files plus a manifest. It follows the original images fairly closely initially so it may be updated over the next few months to further improve things.

How to build multi-arch images

This section uses Fluent Bit as an example but hopefully is of use to anyone wanting to do a similar thing, i.e. build a single Dockerfile for multiple architectures all in one go.

With QEMU set up and buildkit support, you can build all targets in one simple call quite easily and quickly (relative to your hardware, obviously!).

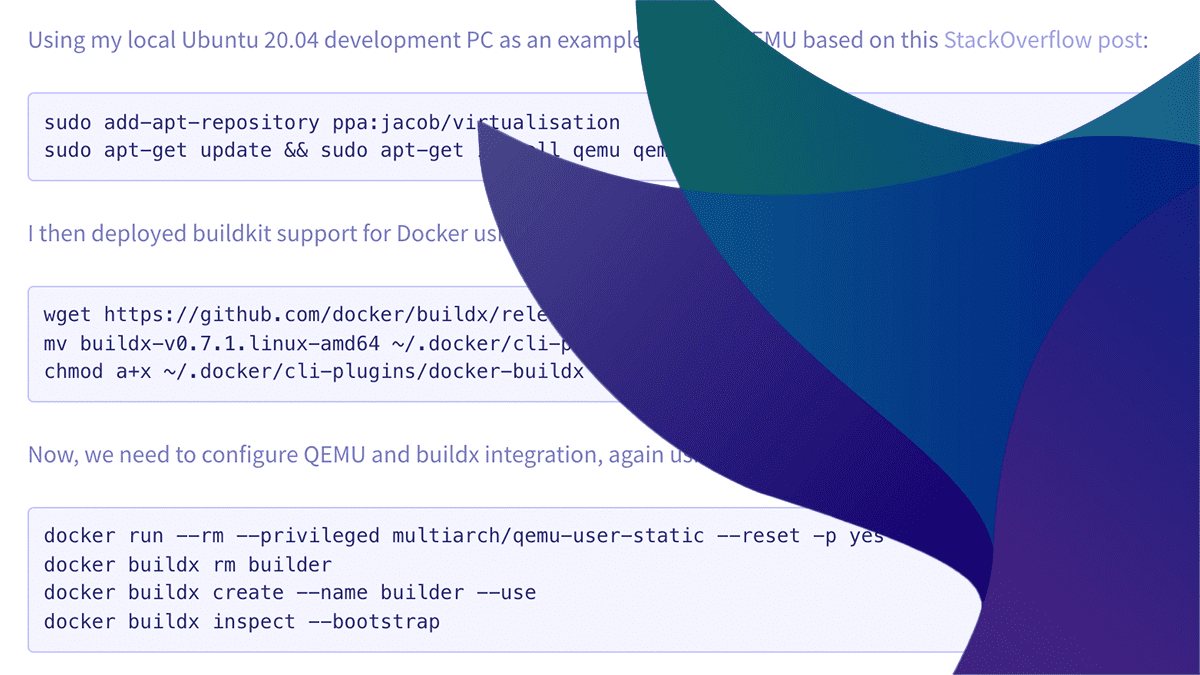

Using my local Ubuntu 20.04 development PC as an example, I set up QEMU based on this StackOverflow post:

sudo add-apt-repository ppa:jacob/virtualisation

sudo apt-get update && sudo apt-get install qemu qemu-user qemu-user-static

I then deployed buildkit support for Docker using the official instructions:

wget https://github.com/docker/buildx/releases/download/v0.7.1/buildx-v0.7.1.linux-amd64

mkdir -p ~/.docker/cli-plugins

mv buildx-v0.7.1.linux-amd64 ~/.docker/cli-plugins/docker-buildx

chmod a+x ~/.docker/cli-plugins/docker-buildx

Now, we need to configure QEMU and buildx integration, again using a StackOverflow post for guidance:

docker run --rm --privileged multiarch/qemu-user-static --reset -p yes

docker buildx rm builder

docker buildx create --name builder --use

docker buildx inspect --bootstrap

Once we have that we can now build all in one go from the root of the Fluent Bit git repository:

docker buildx build --platform "linux/amd64,linux/arm64,linux/arm/v7" -f ./dockerfiles/Dockerfile.multiarch --build-arg FLB_TARBALL=https://github.com/fluent/fluent-bit/archive/v1.8.11.tar.gz ./dockerfiles/

An unrelated benefit of using buildx is that multi-stage builds skip targets that are not required. It also has other benefits, e.g. extra mount types (SSH, cache, etc.), and in my experience it generally builds faster than without.
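Before kicking off a long multi-platform build, it can be worth confirming that the QEMU handlers registered by the `multiarch/qemu-user-static` step are actually in place. The following is a minimal sketch, not part of the Fluent Bit tooling: `check_qemu_handlers` and the `BINFMT_DIR` override are hypothetical names, and it assumes the handlers appear as `qemu-<arch>` entries under `/proc/sys/fs/binfmt_misc`.

```shell
#!/bin/sh
# Hypothetical helper: report which QEMU binfmt_misc handlers are registered.
# Assumption: handlers are exposed as files named qemu-<arch> in the
# binfmt_misc directory. BINFMT_DIR can be overridden (e.g. for testing).
check_qemu_handlers() {
    binfmt_dir="${BINFMT_DIR:-/proc/sys/fs/binfmt_misc}"
    missing=0
    for arch in "$@"; do
        if [ -e "$binfmt_dir/qemu-$arch" ]; then
            echo "ok: qemu-$arch"
        else
            echo "missing: qemu-$arch"
            missing=1
        fi
    done
    # Non-zero exit status if any requested handler is absent.
    return "$missing"
}
```

For the three platforms built here, on an AMD64 host, `check_qemu_handlers aarch64 arm` would cover the two emulated targets.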

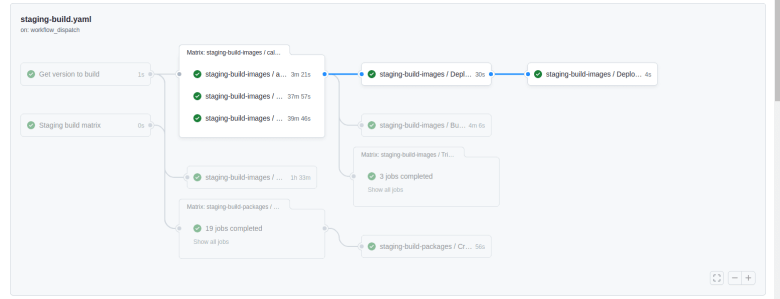

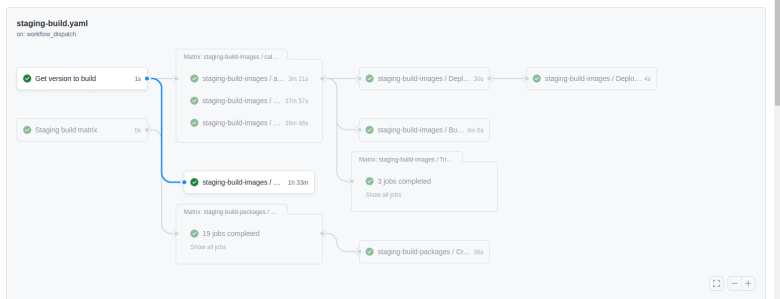

This approach simplifies the CI setup needed to do all this in GitHub Actions. Instead of multiple matrix jobs with a separate job for the manifest, we can do it all in one go.

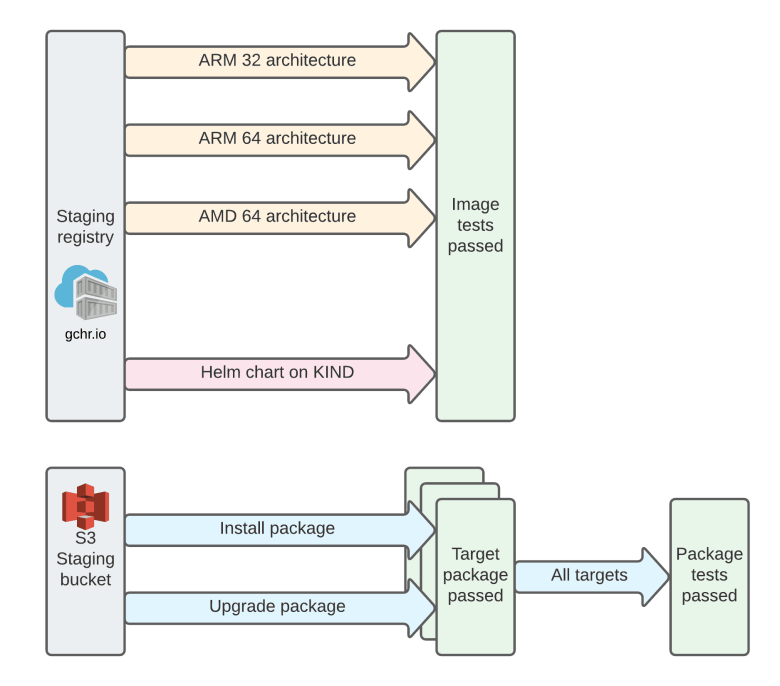

The above shows the Fluent Bit staging workflow introduced in a previous article. The longer highlighted pipeline shows the creation of the original containers, and the single highlighted job below it is the new multi-arch build and push.

Package installation

The original images try to optimise their size by copying libraries from a builder image to the production image, e.g. a small snippet:

COPY --from=builder /usr/lib/x86_64-linux-gnu/sasl /usr/lib/x86_64-linux-gnu/

COPY --from=builder /usr/lib/x86_64-linux-gnu/libz* /usr/lib/x86_64-linux-gnu/

COPY --from=builder /lib/x86_64-linux-gnu/libz* /lib/x86_64-linux-gnu/

COPY --from=builder /usr/lib/x86_64-linux-gnu/libssl.so* /usr/lib/x86_64-linux-gnu/

COPY --from=builder /usr/lib/x86_64-linux-gnu/libcrypto.so* /usr/lib/x86_64-linux-gnu/

COPY --from=builder /usr/lib/x86_64-linux-gnu/libgcrypt.so* /usr/lib/x86_64-linux-gnu/

The main reason for this is to improve security and image size by using the distroless base image. However, the original images are not consistent: the ARM 32 image uses package installation whereas the others do not, and neither of the two ARM images uses a distroless base image.

As you can imagine, this quickly gets unwieldy and is very brittle to missed or modified dependencies: issues may only be found when the container is run, and possibly only when it is run in a particular way or with a particular config. It can also inhibit security or other CI/CD tooling that checks which packages are installed.

To simplify things for this developer preview we therefore decided to switch to standard package installation across the board:

RUN apt-get update && \
    apt-get install -y --no-install-recommends \
    libssl1.1 \
    libsasl2-2 \
    pkg-config \
    libpq5 \
    libsystemd0 \
    zlib1g \
    ca-certificates \
    libatomic1 \
    libgcrypt20 \
    && apt-get clean \
    && rm -rf /var/lib/apt/lists/*

This may have an impact on image size but does have a lot of other benefits as you can see.

Security

The main downside to this approach is that the existing AMD64 containers (but not the other architectures) currently use distroless images, which have significant security benefits. A distroless image does not provide a shell or package manager and generally limits dependencies to the bare minimum required for the application.

One area where we would be particularly interested in feedback is the choice between package installation and direct copying of libraries. Copying has a security benefit, but the downside is the possibility of missing a dependency, particularly one that is dynamically loaded. Each time a dependency changes, the set of libraries we need to copy may change too, so it is non-trivial to maintain and test, particularly for transitive dependencies.
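To get a feel for how many libraries would need copying, one rough starting point is to parse the output of `ldd` on the built binary. This is only a sketch: `resolved_libs` is a hypothetical helper, and `ldd` only reports link-time dependencies, so anything loaded at runtime via `dlopen` will not appear, which is exactly the risk described above.

```shell
#!/bin/sh
# Hypothetical helper: read `ldd` output on stdin and print the absolute
# paths of the shared libraries it resolved, one per line, de-duplicated.
# Lines such as "libfoo.so => not found" and the virtual linux-vdso entry
# are skipped because they carry no absolute path.
resolved_libs() {
    awk '
        # "libname => /abs/path (0x...)" : print the resolved path
        $2 == "=>" && $3 ~ /^\// { print $3 }
        # "/abs/path (0x...)" : typically the dynamic loader itself
        $1 ~ /^\// && $2 != "=>" { print $1 }
    ' | sort -u
}
```

Inside a builder stage this could be used as `ldd /fluent-bit/bin/fluent-bit | resolved_libs`, assuming the binary is installed at that path as in the upstream images.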

An option is to provide a distroless base image with the additional dependencies installed using the same Bazel build approach that Google uses. Another option is to use the distroless base image with a custom script that can copy the different libraries required for each target then run that.
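For the custom script option, one building block would be mapping each Docker platform to the Debian multiarch library directory, since the `COPY` lines shown earlier hard-code `x86_64-linux-gnu`. A minimal sketch follows; `platform_to_triplet` is a hypothetical name, and it covers only the three platforms built in this article.

```shell
#!/bin/sh
# Hypothetical helper: map a Docker platform string to the Debian multiarch
# triplet used in library paths such as /usr/lib/<triplet>/.
# Only the three platforms discussed in this article are handled.
platform_to_triplet() {
    case "$1" in
        linux/amd64)  echo "x86_64-linux-gnu" ;;
        linux/arm64)  echo "aarch64-linux-gnu" ;;
        linux/arm/v7) echo "arm-linux-gnueabihf" ;;
        *)
            echo "unsupported platform: $1" >&2
            return 1
            ;;
    esac
}
```

A copy script could then build paths like `/usr/lib/$(platform_to_triplet linux/arm64)/` rather than repeating a per-architecture list by hand.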

Some good posts on background in this area are below that go over the pro/cons of distroless versus other options:

What do you think? Please let me know.

Verification and testing

One area of significant improvement is the addition of testing across the board for all container image architectures. This was started in the previous work to provide a staging workflow and continues here. With the original approach it is quite easy either to miss a change in a specific architecture (e.g. a new dependency is added or an existing one changes) or for a change to go untested and fail on a specific target. Unfortunately there are recent examples of both, and more improvement work is ongoing: testing is never complete!

Now, we test all architectures and also deploy the Helm chart as a sanity check. This includes the multi-arch containers, which were pretty easy to add by just adding another call of the reusable job we created previously.
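A per-architecture smoke test can also be run locally. The sketch below is not the project's CI job: `smoke_test_image` and the `DOCKER` override are hypothetical, the image reference is whatever you pushed to a registry, and it assumes the binary lives at `/fluent-bit/bin/fluent-bit` as in the upstream images.

```shell
#!/bin/sh
# Hypothetical helper: run the image once per platform and ask the binary
# for its version. Relies on QEMU emulation for non-native platforms.
# Set DOCKER=echo for a dry run that only prints the commands.
smoke_test_image() {
    image="$1"
    shift
    docker_cmd="${DOCKER:-docker}"
    for platform in "$@"; do
        # Override the entrypoint so the call works whether or not the
        # image defines one. Assumed binary path; adjust if it differs.
        "$docker_cmd" run --rm --platform "$platform" \
            --entrypoint /fluent-bit/bin/fluent-bit \
            "$image" --version || return 1
    done
}
```

It would be called as `smoke_test_image <image> linux/amd64 linux/arm64 linux/arm/v7`, stopping at the first platform that fails.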

Conclusion

Calyptia has provided a new set of container images built from a single multi-arch definition including debug variants for all supported (Linux) architectures: http://ghcr.io/fluent/fluent-bit/multiarch

The existing container images (and the AMD64-only debug image) are still present. The new images will be evaluated for performance, security and other requirements, so they are still a developer preview, but we would like to hear feedback from the community on them, hence this article.

Please give the new containers a go and let us know any feedback you have.

Keep an eye out for upcoming tech talks by the maintainers in this and similar areas too.
